This post is one of a series about my journey in learning Kubernetes from the perspective of a total n00b. Feel free to suggest topics in the comments. For more posts in this series, click this link.

In the previous post of this series, I finally got a K8s cluster installed using kubectl. But there were some curiosities…

Unexpected (and hidden) surprises

First of all, it looks like the scripts I used interacted with my Google Cloud account and created a bunch of stuff for me – which is fine – but I was kind of hoping to create an on-prem instance of Kubernetes. Instead, the scripts spun up all the stuff I needed in the cloud. I can see that I’m already using compute, which means I’ll be using $$.

When I go to the “Compute Engine” section of my project, I see 4 VMs:

Those seem to have been spun off from the instance templates that also got added:

Along with those VMs are several disks used by the VM instances:

Those aren’t the only places I might start to see costs creep up on this project. That deployment also created networks and firewall rules. I scrolled down the left menu to “VPC networks” and found several external IP addresses and firewall rules.

When I look at the “Billing” overview on the main page, it says there are no estimated charges, which is weird, because I know using stuff in the cloud isn’t free:

When I click on “View detailed charges” I see the “fine print” – I’ve used $12.37 in my credits in the past few days:

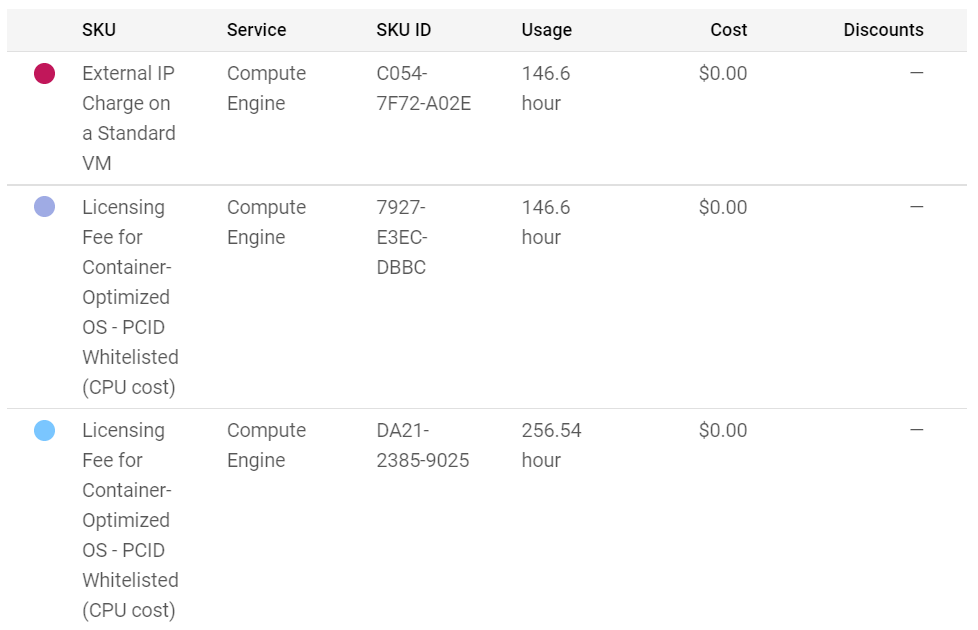

So, it wasn’t immediately apparent, but if I leave these VMs running, I will eat through my $300 credit faster than the 90 days they give. And when you scroll down, it gives more of an indication of what is being charged, but they all read $0.

That’s because there’s an option to show “Promotions and others” on the right-hand “Filters” menu. I uncheck that, and I get the *real* story of what is being charged.

So, if I hadn’t dug deeper into the costs, this learning exercise would have started to get expensive. But that’s ok – that’s a lesson learned. Maybe not about Kubernetes or containers, but about cost… and about where these types of deployments are going.
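To put rough numbers on that credit burn, here is a minimal sketch of the arithmetic. The $300 credit and the $12.37 spent come from the billing page above; the 3 days is my own stand-in for "the past few days", and the function name is made up for illustration.

```python
def days_until_credit_exhausted(credit_total, spent_so_far, days_elapsed):
    """Estimate how many more days the remaining credit lasts at the current burn rate."""
    if days_elapsed <= 0:
        raise ValueError("days_elapsed must be positive")
    daily_rate = spent_so_far / days_elapsed
    if daily_rate == 0:
        # Nothing spent yet, so the credit never runs out at this rate.
        return float("inf")
    return (credit_total - spent_so_far) / daily_rate


# 300.00 and 12.37 are from the post; 3 days is an assumption.
remaining = days_until_credit_exhausted(300.00, 12.37, 3)
print(f"Credit lasts roughly {remaining:.0f} more days at this rate")
```

With those assumed inputs the estimate lands well short of the 90-day window, which matches what the detailed charges were hinting at. Plug in your own numbers from the billing report to get a real estimate.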

Cloud residency

When I started this exercise, I wanted to deploy an on-prem instance to learn more about how Kubernetes works. And I still may try to accomplish that, but it’s apparent that the kubectl method found in the Kubernetes docs isn’t the way to do that. The get-kube.sh and other scripts create cloud VM instances and all the necessary pieces of a full K8s cluster, which is great for simplicity and shows that the future for K8s is a fully managed platform as a service, hosted in the cloud. That’s why things like Azure Kubernetes Service, Google Kubernetes Engine, Amazon EKS, Red Hat OpenShift and others exist – to make this journey simple and effective for administrators who don’t have the expertise to create their own Kubernetes clusters/container management systems.

And to that end, managed storage and backup services like NetApp Astra add simplicity and reliability to the mix. As I continue on this learning exercise, I am seeing more and more why that is, and why things like OpenStack didn’t take off like they were supposed to. Kubernetes seems to have learned the lessons OpenStack didn’t – that complexity and the lack of a managed service offering reduce accessibility to the platform, regardless of how many problems it may solve.

But I’ll cover that in future posts. This series is still about learning K8S. And I still can’t access the management plane on my K8S cluster due to the error mentioned in the previous post:

User verboten!

So, in this case, when I tried to access the IP address for the K8S dashboard, I was getting denied access. After googling a bit and trying a few different things, the answer became apparent – I had a cert issue.

The first lead I got was this Stack Overflow post:

There, I found some useful things to try, such as accessing /readyz and /version (which both worked for me). But it didn’t solve my issue. Even the original poster had given up and started over.

So I kept googling and came across my answer here:

https://jhooq.com/message-services-https-kubernetes-dashboard-is-forbidden-user/

Turns out, I had to create a certificate and import it into the web browser. The post above references Vagrant as the deployment, which wasn’t what I used, but the steps all worked for me once I found the .kube/config location.
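For anyone who would rather script the "find the cert in .kube/config" part, here is a minimal sketch. It assumes the kubeconfig embeds the client certificate and key inline as base64 under `client-certificate-data` and `client-key-data` (some configs point at files instead, in which case this finds nothing). The function names and the output file names `kubecfg.crt` and `kubecfg.key` are hypothetical, and it uses a simple regex rather than a real YAML parser to stay within the standard library.

```python
import base64
import re
from pathlib import Path


def extract_embedded_pem(kubeconfig_text, field):
    """Return the decoded bytes of the first `field: <base64>` entry, or None if absent."""
    pattern = rf"^\s*{re.escape(field)}:\s*(\S+)\s*$"
    match = re.search(pattern, kubeconfig_text, re.MULTILINE)
    if match is None:
        return None
    return base64.b64decode(match.group(1))


def write_client_credentials(kubeconfig_path, out_dir):
    """Write the embedded client cert and key (if present) to out_dir; return the paths written."""
    text = Path(kubeconfig_path).read_text()
    out_dir = Path(out_dir)
    written = []
    # Output file names are hypothetical; pick whatever suits you.
    for field, filename in [
        ("client-certificate-data", "kubecfg.crt"),
        ("client-key-data", "kubecfg.key"),
    ]:
        pem = extract_embedded_pem(text, field)
        if pem is not None:
            target = out_dir / filename
            target.write_bytes(pem)
            written.append(target)
    return written


# Example call (commented out so nothing touches a real config by accident):
# write_client_credentials(Path.home() / ".kube" / "config", ".")
```

Browsers generally want a PKCS#12 bundle rather than separate PEM files, so the two outputs still need to be combined, for example with `openssl pkcs12 -export -clcerts -inkey kubecfg.key -in kubecfg.crt -out kubecfg.p12`, before importing. Keep in mind the key file is a private key, so treat it accordingly.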

Once I did that, I could see the list of paths available, which is useful, but wasn’t exactly what I thought accessing the IP address would give me. I thought there would be an actual dashboard! I did find that things like /logs and /metrics wouldn’t work without the cert, so at least I can access those now. But I suspect the managed services will have more robust ways to manage the clusters than a bunch of REST calls.
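Since what I got back really is just a set of REST paths, the same requests can be made from a script instead of the browser. This is a rough sketch only: it assumes the client certificate and key from .kube/config have been saved as PEM files, and the file names, the CA file, the server address (a documentation-range IP) and the helper names are all placeholders of mine, not anything from my actual cluster.

```python
import ssl
import urllib.request


def api_url(server, path):
    """Join the API server address and a path such as /version without doubling slashes."""
    return server.rstrip("/") + "/" + path.lstrip("/")


def make_client_context(cert_file, key_file, ca_file=None):
    """Build a TLS context that presents a client certificate to the API server."""
    # ca_file should be the cluster's CA certificate; with None, the system trust
    # store is used, which usually will not trust a cluster's self-signed CA.
    context = ssl.create_default_context(cafile=ca_file)
    context.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return context


def fetch(server, path, context):
    """GET one path from the API server and return (status code, body text)."""
    with urllib.request.urlopen(api_url(server, path), context=context, timeout=10) as response:
        return response.status, response.read().decode("utf-8", errors="replace")


# Hypothetical usage; every value below is a placeholder:
# ctx = make_client_context("kubecfg.crt", "kubecfg.key", ca_file="ca.crt")
# for path in ["/version", "/readyz", "/metrics"]:
#     print(path, fetch("https://203.0.113.10", path, ctx)[0])
```

It is still a bunch of REST calls rather than a dashboard, but it makes it easy to check which paths answer with the cert and which don't.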

Progress?

So, I’ve gotten a bit further now and am starting to peel back this onion, but there’s still a lot more to learn. I’m starting to wonder if I need to back up and start with some sort of Kubernetes training to learn a bit more before I try this again. One thing I do know is that I need to kill this Kubernetes instance in GCP before it kills my wallet! 🙂

Lessons learned for me in this post:

- Setting up your own K8S cluster is a bit more of a challenge than I had anticipated

- Learning the basics ahead of time might be the best approach

- Managed Kubernetes is likely going to be the answer to the “how should I deploy” except in specific circumstances

- K8S out of the box is missing some functionality that would be pretty useful, such as a GUI dashboard

- Pay attention to what actually gets deployed – and where – and dig into your billing!

Feel free to add your comments or thoughts below and stay tuned for the next post in this series (topic TBD).
